Essential Insights

- By 2025, AI has become integral to software development, with most programmers using large language models (LLMs) for code-related tasks, leading to faster development cycles.

- Despite productivity increases, code quality and security remain inconsistent; older code can harbor vulnerabilities that AI tools might propagate, potentially nullifying efficiency gains.

- A majority of developers now employ AI tools (85% according to a JetBrains survey), yet AI still struggles with generating secure code, with top models reaching only 69% effectiveness under specific prompts.

- To enhance security in AI-assisted development, organizations must implement robust security protocols and training, treating AI-generated code with the same scrutiny as human-written code.

New Security for New AI Development

AI tools have become essential to software development. By 2025, a significant majority of developers had integrated AI code generation into their workflows: a JetBrains survey of nearly 25,000 developers found that 85% used AI regularly, and around 90% of software professionals turned to AI for help with coding and design. Despite these advances, security remains a pressing issue in 2026.

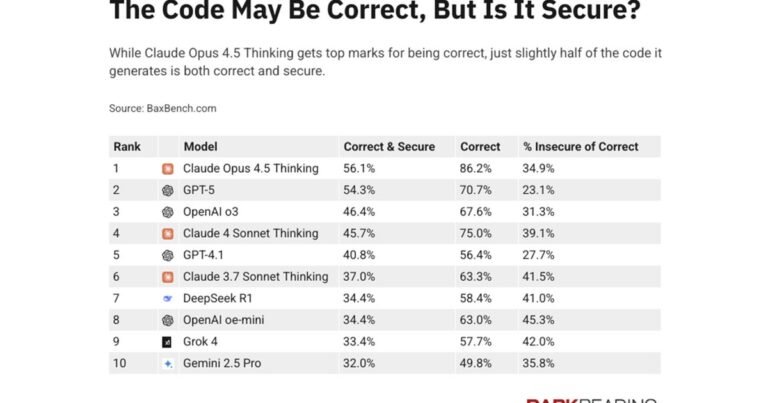

Currently, leading AI models like Anthropic’s Claude Opus 4.5 produce secure code only 56% of the time when the prompt contains no security guidance. Even with security-focused prompts, they produce secure code only 69% of the time. Because AI-assisted development increases code volume, it also increases the number of potential bugs, and many teams lose productivity reworking AI-generated code. Adding security tools to the development pipeline is therefore crucial: developers should include security reminders in their prompts and run traditional scanners on the output to improve code quality.
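As a rough illustration of pairing security reminders with a scanning step, the Python sketch below prepends a reminder to a code-generation prompt and checks the generated code against a few patterns. The reminder text, the pattern list, and the function names are all hypothetical, and the regex checks are a toy stand-in for a real static analysis tool, not a replacement for one.

```python
import re

# Hypothetical reminder text; adjust the wording to your own policy and model.
SECURITY_REMINDER = (
    "Follow secure coding practices: validate all input, use parameterized "
    "queries, and never hard-code secrets."
)

# Illustrative patterns only. A real pipeline would call a dedicated scanner.
RISKY_PATTERNS = {
    "use of eval": re.compile(r"\beval\s*\("),
    "shell=True in subprocess call": re.compile(r"shell\s*=\s*True"),
    "hard-coded password": re.compile(
        r"password\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE
    ),
}


def with_security_reminder(prompt: str) -> str:
    """Prepend the security reminder to a code-generation prompt."""
    return f"{SECURITY_REMINDER}\n\n{prompt}"


def scan_generated_code(code: str) -> list[str]:
    """Return the names of the risky patterns found in the generated code."""
    return [name for name, pattern in RISKY_PATTERNS.items() if pattern.search(code)]


if __name__ == "__main__":
    print(with_security_reminder("Write a function that runs a shell command."))
    snippet = 'password = "hunter2"\nsubprocess.run(cmd, shell=True)'
    for finding in scan_generated_code(snippet):
        print("flagged:", finding)
```

In practice the scan step would gate a merge or a commit, so that flagged AI-generated code gets the same review as any other change.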

AI Everywhere

As AI features continue to permeate development tools, they must offer more than just code generation. With proper configuration, AI agents can detect insecure code and suggest secure alternatives. However, developers must also learn to interact securely with these AI systems, and teams should treat AI-generated code with the same scrutiny as human-written code.

Additionally, companies need to enforce policies regarding the AI components used in their applications. Unsecured Model Context Protocol (MCP) servers pose significant risks: a recent scan found nearly 1,900 publicly accessible MCP servers that lacked authentication. To address this, businesses should create AI bills of materials that allow only vetted technologies. This approach helps secure development processes and protects productivity while minimizing risk. Adopting these AI technologies securely will ultimately improve both the speed and the safety of software development.
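One way to picture an AI bill of materials is as an allowlist that every declared AI component is checked against. The Python sketch below assumes a hypothetical in-house record format (`AIComponent`) and made-up component names; it flags anything that is not on the vetted list, as well as any MCP server declared without authentication. It is a minimal sketch of the idea, not a standard format or tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIComponent:
    """Hypothetical record for one AI component an application depends on."""
    name: str
    kind: str                  # e.g. "model" or "mcp_server"
    requires_auth: bool = True


# Hypothetical vetted list; in practice a security team would maintain this.
VETTED = {
    ("model", "example-code-model"),
    ("mcp_server", "internal-docs-mcp"),
}


def audit(components: list[AIComponent]) -> list[str]:
    """Return one finding per policy violation among the declared components."""
    findings = []
    for component in components:
        if (component.kind, component.name) not in VETTED:
            findings.append(f"{component.name}: not on the vetted list")
        if component.kind == "mcp_server" and not component.requires_auth:
            findings.append(f"{component.name}: MCP server without authentication")
    return findings


if __name__ == "__main__":
    declared = [
        AIComponent("example-code-model", "model"),
        AIComponent("internal-docs-mcp", "mcp_server", requires_auth=False),
        AIComponent("random-public-mcp", "mcp_server"),
    ]
    for finding in audit(declared):
        print(finding)
```

A check like this could run in continuous integration, so that an unvetted component or an unauthenticated MCP server fails the build before it reaches production.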
