Fast Facts

- The critical security vulnerabilities in AI agents exist primarily in the action layer, where agents interact with external systems via APIs, triggering potentially risky actions beyond the model’s control.

- Current AI security efforts focus too narrowly on the model layer, neglecting the action layer, which is where most real-world breaches, like the McKinsey incident, occur through API calls and infrastructure exploitation.

- Effective security at the action layer requires comprehensive visibility across all connected systems, including APIs, MCP servers, and infrastructure, along with behavioral baselines and ongoing real-time monitoring.

- Securing the action layer is essential because most damage, including data leaks and unauthorized transactions, occurs after the model has made its decision. Preventing it takes a holistic, full-stack security approach.

The Issue

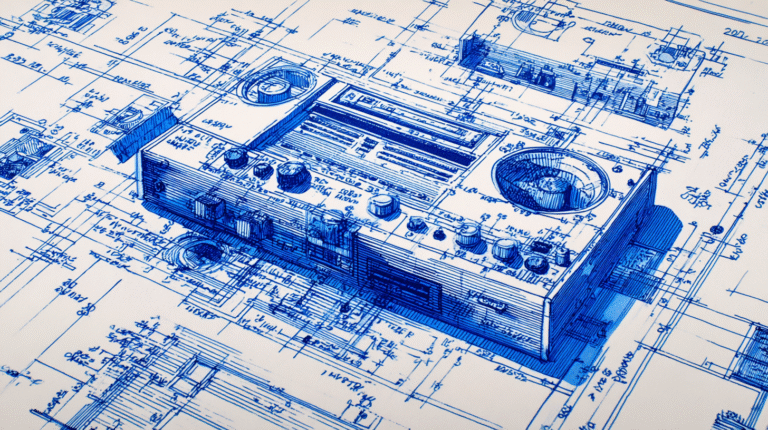

The story centers on the growing risks posed by AI agents, particularly at the action layer, which security measures have largely overlooked. AI security originally focused on the model layer, where prompts and data leakage were the primary concerns. As AI agents began to act autonomously (calling APIs, triggering workflows, and interacting with other systems), the attack surface expanded beyond the model. These actions happen in the infrastructure, where vulnerabilities are less visible and harder to monitor. The article cites the McKinsey breach as evidence: attackers exploited API pathways fed by the AI.

The article reports that most security efforts fall short because they do not cover the full scope of agent activity across interconnected layers, from APIs and MCP servers down to live infrastructure. Salt Security argues for monitoring the action layer comprehensively and presents its Agentic Security Graph as a way to visualize risks and behaviors in real time, with the aim of preventing breaches that can occur despite strong prompt security. The shift in focus points to a clear need: organizations must see and secure the entire operational environment of their AI agents before an attack happens.
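To make the model-layer versus action-layer distinction concrete, here is a minimal Python sketch of a control that sits where the agent acts rather than where it reasons. It assumes a hypothetical `ActionGate` wrapper that every tool call must pass through; the agent names, tool names, and functions are illustrative and are not taken from Salt Security or any real product.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Set, Tuple


@dataclass
class ActionGate:
    """Hypothetical checkpoint between an agent and the tools it may call."""

    # Allowed (agent_id, tool_name) pairs; anything else is denied.
    allowed: Set[Tuple[str, str]]
    # Record of every attempted action, whether permitted or not.
    audit_log: List[dict] = field(default_factory=list)

    def call(self, agent_id: str, tool_name: str,
             tool: Callable[..., object], **kwargs):
        permitted = (agent_id, tool_name) in self.allowed
        # Log before executing so that denied attempts are visible too.
        self.audit_log.append({
            "agent": agent_id,
            "tool": tool_name,
            "args": kwargs,
            "permitted": permitted,
        })
        if not permitted:
            raise PermissionError(f"{agent_id} may not call {tool_name}")
        return tool(**kwargs)


# Illustrative tools standing in for real API calls.
def lookup_order(order_id: str) -> str:
    return f"order {order_id}: shipped"


def issue_refund(order_id: str, amount: float) -> str:
    return f"refunded {amount} for {order_id}"


gate = ActionGate(allowed={("support-agent", "lookup_order")})

result = gate.call("support-agent", "lookup_order", lookup_order, order_id="A1")

try:
    gate.call("support-agent", "issue_refund", issue_refund,
              order_id="A1", amount=50.0)
    blocked = False
except PermissionError:
    blocked = True
```

The point of the sketch is that the refund attempt is stopped and recorded no matter how the model was persuaded to try it, which is the kind of coverage prompt-level defenses alone cannot give.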

What’s at Stake?

The issue “Everyone Is Securing the Wrong Layer of AI” can directly threaten your business’s security and growth. If your company focuses only on protecting the model layer, attackers can still reach your systems through the APIs, workflows, and infrastructure that AI agents act on. Sensitive data, transactions, and decision-making processes then remain vulnerable even when prompt security is strong. As AI integrates deeper into operations, leaving the action layer unmonitored creates critical gaps. Your business could face breaches, data leaks, or unauthorized transactions, all leading to financial damage and reputational harm. Without securing the right AI layers, your defenses are superficial and your enterprise stays exposed to new, sophisticated threats.

Possible Next Steps

Timely remediation matters because vulnerabilities addressed too late can lead to exploitation, data breaches, and loss of trust. Organizations that secure the wrong layer of AI leave critical weaknesses open to malicious actors, which undermines overall security and operational continuity.

Proper Layer Focus

- Conduct comprehensive risk assessments to identify which AI components need protection.

- Prioritize security controls across the data management, model training, deployment, and monitoring layers according to the risk each one carries.

Holistic Security Approach

- Implement defense-in-depth strategies tailored specifically to AI systems, covering data integrity, model robustness, and access controls.

- Regularly update and patch AI frameworks and infrastructure to mitigate known vulnerabilities.

Training & Awareness

- Educate staff on the architecture of AI systems to ensure security efforts target the correct layers.

- Foster collaboration between AI developers, security teams, and operations to maintain cohesive security measures.

Continuous Monitoring

- Deploy real-time monitoring tools to detect unauthorized access or unusual activity within AI components.

- Establish incident response plans specifically for AI-related security incidents to enable swift action.
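As a rough illustration of behavioral baselining for agent activity, the Python sketch below counts which endpoints each agent normally calls and flags deviations in a later window. The event format, the `max_ratio` threshold, and the agent and endpoint names are all assumptions made for this example, and it assumes the baseline and the observed window cover comparable time spans. A production system would use richer signals than raw counts.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

# Assumed event shape: (agent_id, endpoint).
Event = Tuple[str, str]


def build_baseline(events: Iterable[Event]) -> Dict[str, Counter]:
    """Count how often each agent called each endpoint."""
    baseline: Dict[str, Counter] = {}
    for agent_id, endpoint in events:
        baseline.setdefault(agent_id, Counter())[endpoint] += 1
    return baseline


def find_anomalies(baseline: Dict[str, Counter],
                   window: Iterable[Event],
                   max_ratio: float = 3.0) -> List[str]:
    """Flag endpoints an agent has never used, or now uses far more often."""
    observed = build_baseline(window)
    alerts: List[str] = []
    for agent_id, counts in observed.items():
        known = baseline.get(agent_id, Counter())
        for endpoint, n in counts.items():
            if known[endpoint] == 0:
                alerts.append(f"{agent_id}: new endpoint {endpoint}")
            elif n > max_ratio * known[endpoint]:
                alerts.append(
                    f"{agent_id}: {endpoint} called {n} times "
                    f"(baseline {known[endpoint]})"
                )
    return alerts


# Illustrative history and a later window of the same length of time.
history = ([("billing-agent", "GET /invoices")] * 10
           + [("billing-agent", "POST /payments")] * 2)
window = ([("billing-agent", "GET /invoices")] * 12
          + [("billing-agent", "POST /payments")] * 9
          + [("billing-agent", "GET /admin/users")])

alerts = find_anomalies(build_baseline(history), window)
for alert in alerts:
    print(alert)
```

With this sample data the slightly busier invoice lookups pass, while the jump in payment calls and the first-ever call to an admin endpoint are both flagged.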

Governance & Policies

- Develop and enforce policies that explicitly define security responsibilities across all AI layers.

- Align with frameworks such as the NIST Cybersecurity Framework (CSF), emphasizing risk management and corrective actions at the appropriate layers.

